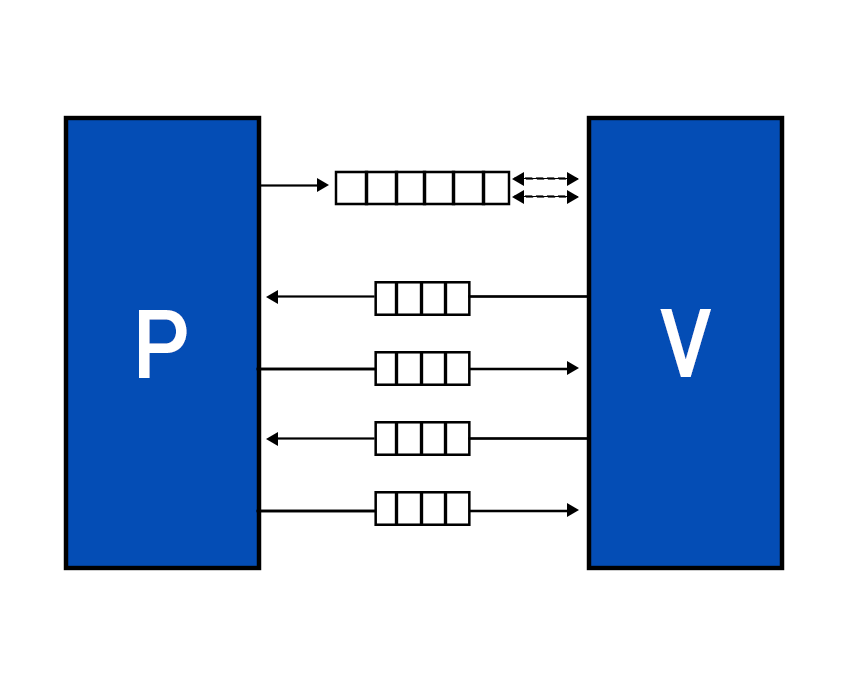

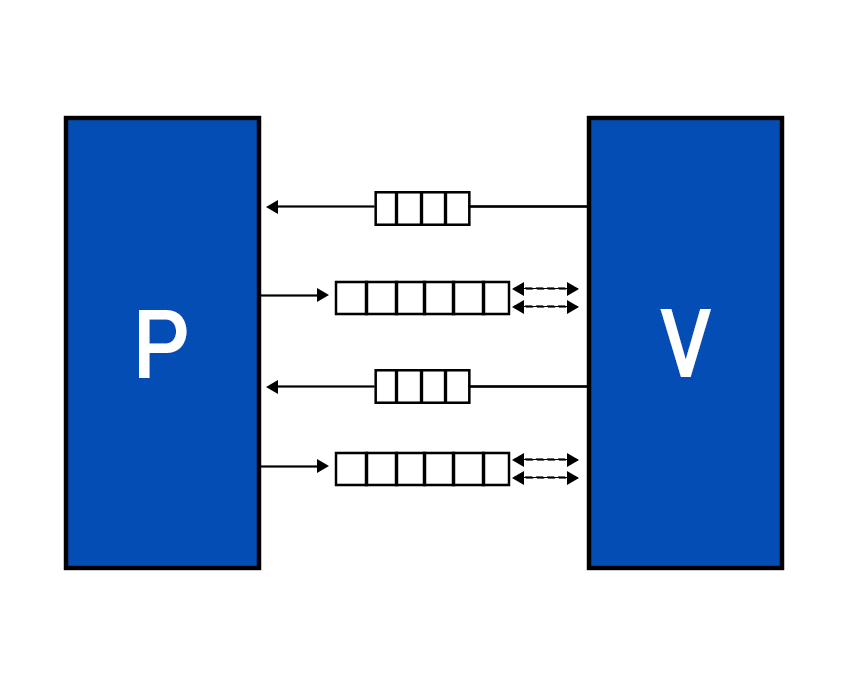

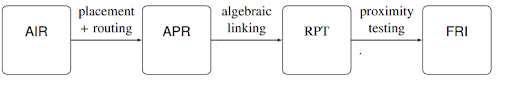

STARKs revolves around the idea of computational integrity, which essentially means that the output of a specific computation is correct. In traditional financing, where banks are involved, trusted third parties such as auditors, accountants, etc ensure their integrity. Whereas in the crypto world, the integrity of computed data is verified by reading the whole proof. This proves to be a naive action. Furthermore, if you were to prove to someone that you are over 18, you are most likely to provide your ID card, which means sharing more information than required. This is not ideal and zero-knowledge proofs can take care of it. Initially, Zero-knowledge system constructions were based on PCPs or probabilistically checkable proofs. Prover: An algorithm that receives an input x and calculates pi for that x. Verifier: A polytime deterministic algorithm that receives an input x and proof pi, then accepts or rejects that pi. Suppose I were to say that in book b, the character c dies. Now b consists of 1087 pages. You may read the whole book, all 1087 pages, to verify the claim. Or you can query someone who read the book, but then the question of their integrity arises. You can ask them multiple times to make sure they claim the same thing everytime, if their answer is false, there is a chance that they slip up once in a while. But the example doesn't do very well in explaining probabilistic checking. The question is, in computation what number of bits does the verifier need to check to accept or reject the claim. The proof may be binary string =(1,2............,n) (0,1).The verifier can query any bit (ith bit) and the prover will provide iin response which counts as one query. How many will satisfy the integrity of the computation? As an NP is a collection of all polynomials with time bounds, the NP-complete problems can be translated into one another; for example, theorems with poly length proofs can be transformed into 3 colors graphs. An NP reads inputs and then accepts or rejects the proof. As randomness in cryptography provides more security, what a PCP verifier does is to read input, toss in some randomness, then read a few bits in proof, and then accept or reject the proof. How many is will satisfy the integrity of the computation? Zero-Knowledge PCPs are known to be quantum secure proofs and are transparent. They have six core attributes Interactive proofs incorporate features of interactiveness and randomness. PCP includes randomness and probabilistic checking. This means that the prover sends a message, and the verifier, instead of reading it entirely, samples it probabilistically, i.e., the verifier has random access to proof string. It can read subsets as per choice. If we combine all three attributes of interaction, randomness, and probabilistic checking, we will get IPCPs or IOPs. In IPCPs, the prover sends a PCP to the verifier, and they subsequently engage in interact proof. In IOPs, again, prover and verifier interact, but now the verifier has the freedom to sample it instead of reading it entirely. IOPs make use of Random Oracles to generate challenges. After receiving the challenge, prover’s generates the PCP, commits it through a Merkle tree, then prover puts the Merkle tree’s root into a hash chain that produces the next randomness. The answer to why use a hash chain is because the prover needs temporal consistency. Multiple PCPs are generated, so the prover needs the proof to line up. In the end, the prover gathers all the requested locations, creates authentication paths, and sends them to the verifier. The verifier makes sure the proofs line up and then checks the answer to accept or reject. An argument is ZK-STARKs if it satisfies certain properties. While STARKs are known as an interactive protocol, the interactivity is eventually replaced by a transformation to a non-interactive system wherein the prover provides proof, and the verifier decides whether to accept or reject it. Suppose we have a polynomial for a proof that works out for several points. STARKs verifier checks the proof probabilistically, which means that the verifier verifies the polynomial for several samples. The problem that arises here is verifier might check 99% of the bit, and the 1% remaining bits might be that evil, malicious bit. The simple answer to this problem is error-correcting codes. Error-correcting codes add redundancy to a computation. We take some short messages and then apply some transformation to make it a much longer code work. Rate parameter measures the amount of redundancy. Rate is the ratio of the number of symbols in the message to the size of code words. So rate = ½ means the amount of added redundancy equals the amount of information. And rate ⅛ implies the number of evaluation points equals 8 times greater than the polynomial degree. Error is proportional to the rate, which gives an exciting tradeoff; decreasing the rate, you get the more minor error, but your prover runs for more time. The redundancy essentially amplifies the error even if it is in one bit, making it easier to detect the error. Read Vitalik Buterin’s blog on proof with polynomials to get more insights on how it works. As we know, that prover needs to generate a polynomial, but it could be any polynomial. So how do we restrict that the polynomial should satisfy certain conditions? In STARKs, the prover and verifier agree to constraints beforehand. STARKs uses AIR, an Algebraic intermediate Representation constraint system, i.e., the constraints expressed as polynomials composed over trace cells. Zk implementations require arithmetization, which is the reduction of the computational problems to algebraic problems. This involves low-degree polynomials over a finite field. The starting point in all proofs is the problem you wish to satisfy computational integrity for. The first point of arithmetization is contributing AIR, algebraic intermediate representation. The AIR to ZK-STARKs process consists of 4 parts. IOPs derived from PCPs are unique to STARKs. In our future publication, we’ll be learning about AIR, APR, RPT, and FRI. E. Ben-Sasson, I. Bentov, Y. Horesh, M. RiabzevScalable. Transparent, and post-quantum secure computational integrity. Mar 2018. A. Chiesa. IOP based Zero-Knowledge Proofs. Apr 2019.Probabilistically Checkable Proofs:

Interactive Oracle Proofs

ZK-STARKs

STARKs Arithmetization Phases

Resources: